Birdle

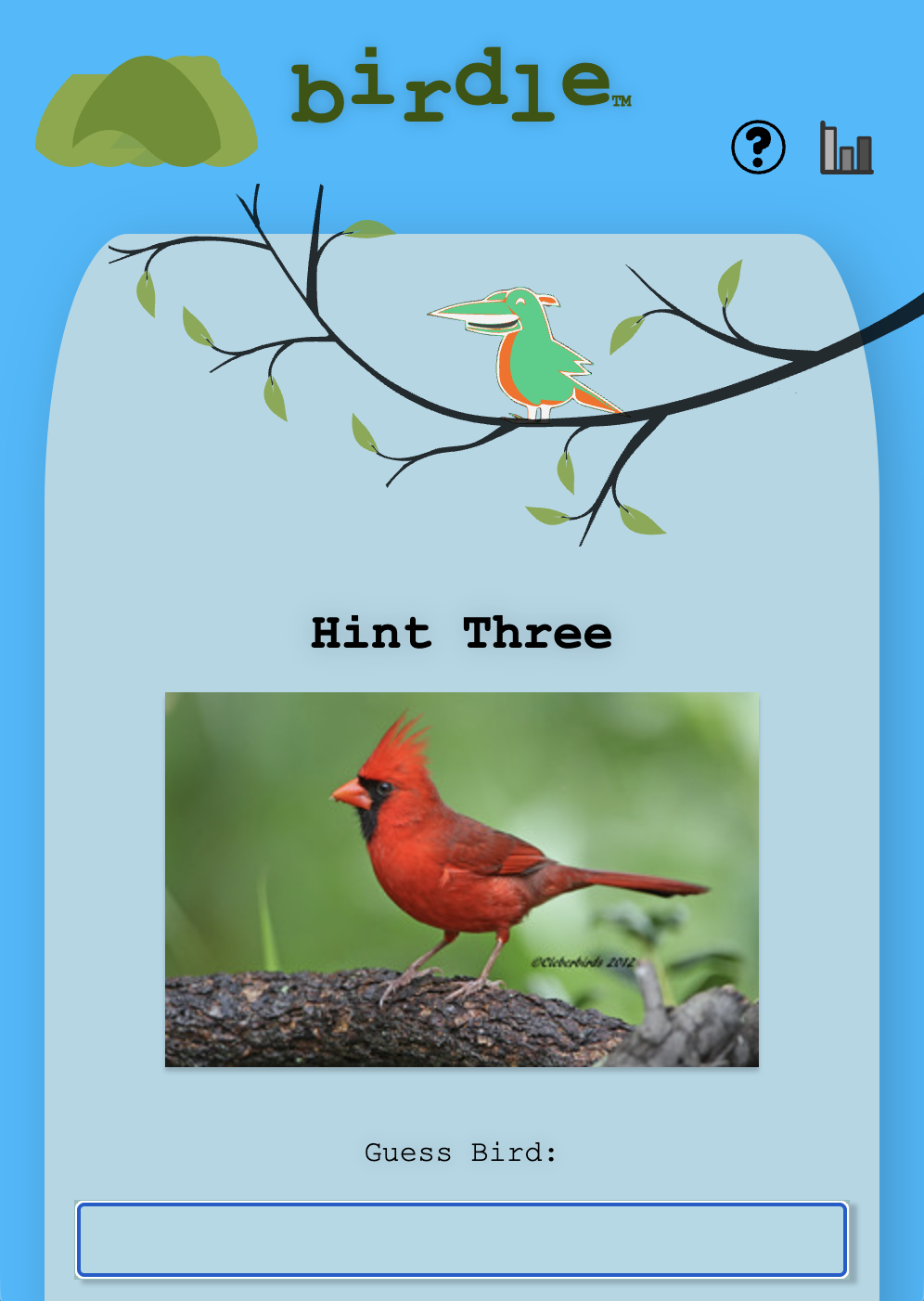

Birdle.fun is an interactive, daily geography and ornithology game inspired by the Wordle phenomenon. Instead of guessing words, players are challenged to identify a "Daily Bird" based on its migratory patterns and global distribution.

The application features a high-fidelity 3D globe where players can visualize species sightings, explore regional bird populations, and track daily challenges. It merges open-source biodiversity data with immersive web graphics to create an educational experience for birders and gamers alike.

Key Features

Daily Challenges: A new species to discover every 24 hours.

Interactive 3D Visualization: Real-time rendering of bird locations on a rotatable, zoomable globe.

Geospatial Logic: Intuitive feedback based on distance and direction from the target species.

Data-Driven Exploration: Utilizes global bird occurrence data to educate users on avian habitats.

Tech Stack: Rollup / Vite, JavaScript (ES6+), Three.js, Globe.gl, GeoJSON & JSON, Heroku
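The daily rotation and the distance-and-direction feedback described above could be sketched roughly as follows. This is a minimal illustration rather than Birdle's actual code: every name here (`LatLng`, `dailyIndex`, `haversineKm`, `initialBearingDeg`, `compassPoint`, `guessFeedback`) is hypothetical, and it assumes that a guess and the target species can each be reduced to a single representative coordinate.

```typescript
// Hypothetical helpers; none of these names come from the Birdle codebase.
interface LatLng { lat: number; lng: number; }

const EARTH_RADIUS_KM = 6371; // mean Earth radius
const MS_PER_DAY = 86400000;
const toRad = (deg: number): number => (deg * Math.PI) / 180;
const toDeg = (rad: number): number => (rad * 180) / Math.PI;

// Map a date to one index per UTC day, so every player sees the same bird.
function dailyIndex(date: Date, poolSize: number): number {
  const dayNumber = Math.floor(date.getTime() / MS_PER_DAY);
  return ((dayNumber % poolSize) + poolSize) % poolSize;
}

// Great-circle distance between two points (haversine formula).
function haversineKm(a: LatLng, b: LatLng): number {
  const sinLat = Math.sin(toRad(b.lat - a.lat) / 2);
  const sinLng = Math.sin(toRad(b.lng - a.lng) / 2);
  const h =
    sinLat * sinLat +
    Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * sinLng * sinLng;
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.min(1, Math.sqrt(h)));
}

// Initial compass bearing from one point toward another, in [0, 360).
function initialBearingDeg(from: LatLng, to: LatLng): number {
  const lat1 = toRad(from.lat);
  const lat2 = toRad(to.lat);
  const dLng = toRad(to.lng - from.lng);
  const y = Math.sin(dLng) * Math.cos(lat2);
  const x =
    Math.cos(lat1) * Math.sin(lat2) -
    Math.sin(lat1) * Math.cos(lat2) * Math.cos(dLng);
  return (toDeg(Math.atan2(y, x)) + 360) % 360;
}

// Snap a bearing to the nearest of eight compass points.
function compassPoint(bearingDeg: number): string {
  const names: string[] = ["N", "NE", "E", "SE", "S", "SW", "W", "NW"];
  return names[Math.round(bearingDeg / 45) % 8];
}

// Feedback for one guess: how far away the target is, and which way to go.
function guessFeedback(
  guess: LatLng,
  target: LatLng
): { distanceKm: number; direction: string } {
  return {
    distanceKm: Math.round(haversineKm(guess, target)),
    direction: compassPoint(initialBearingDeg(guess, target)),
  };
}
```

A call such as `guessFeedback({ lat: 0, lng: 0 }, { lat: 0, lng: 90 })` reports a target roughly a quarter of the way around the equator, due east. Using the UTC day number keeps the daily pick deterministic without any server state, though a real deployment might prefer a curated schedule.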

Brand Journey - A Mobile Brand Adventure

Embrace innovation! I built an interactive storytelling application called Brand Journey that bridges the gap between a patron’s story and a brand’s identity. The goal is Agile Immersion: high-fidelity storytelling that no longer requires a six-month production cycle.

By leveraging Replit’s AI Animation engine, I’ve reduced visual production time from weeks to hours, allowing brands to pivot narratives and deploy cinematic experiences to the edge instantly.

The 2026 Integrated Stack:

Creative Orchestration: Replit Animation (AI-Generated Hero)

Interactive Engine: Three.js / React Three Fiber (Browser-native 3D)

Story & Image Generation: OpenAI (GPT & DALL-E integration)

Narrative Voice: ElevenLabs AI (Dynamic, personalized TTS)

Deployment: Next.js 16 + Vercel (Sub-10ms Edge performance)

This is the future of MarTech: Personalization that caters to the patron, immersion that feels native, and engagement that actually converts.

Tri-Sense

Tri-Sense is an innovative accessibility-focused application designed to bridge the gap between physical gestures and digital communication. Originally conceived as a robust dictionary lookup tool, the project evolved into an ambitious Machine Learning (ML) experiment aimed at recognizing American Sign Language (ASL) through computer vision.

The platform empowers users to interact with language through three distinct sensory channels: visual (text), auditory (vocal pronunciation), and physical (hand motion tracking). By integrating real-time gesture recognition with professional linguistic databases, Tri-Sense explores the future of touchless, accessible interfaces.

Key Features

Intelligent Dictionary Engine: Retrieves comprehensive word data, including parts of speech and phonetics, via the Merriam-Webster API.

Multimodal Accessibility: High-fidelity audio playback for pronunciation alongside clear, screen-reader-optimized text.

ASL Motion Tracking: Utilizes advanced computer vision to detect hand positions and translate ASL alphabet gestures into digital text.

Axe-Certified Design: Built with inclusivity at the forefront, achieving "Adequate" accessibility status through rigorous Axe DevTools testing.

Tech Stack: Java / Spring Boot, PostgreSQL, React.js, TensorFlow.js, MediaPipe, FingerPose, Axe Accessibility
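To give a feel for the ASL motion-tracking step, here is a minimal sketch of how hand landmarks might be turned into a per-finger "extended or curled" signal, which is the kind of feature an alphabet classifier can build on. It is not Tri-Sense's actual pipeline and it calls no library APIs: it assumes an input of 21 3D points in the MediaPipe hand-landmark ordering (wrist at 0, index finger at 5 to 8, middle at 9 to 12, ring at 13 to 16, pinky at 17 to 20), and the names `Point3`, `jointAngleDeg`, `FINGER_JOINTS`, `extendedFingers`, and the 160-degree threshold are all illustrative assumptions.

```typescript
// Hypothetical types and helpers; the landmark ordering is the assumed input format.
interface Point3 { x: number; y: number; z: number; }

// Angle in degrees at joint b, formed by the segments b->a and b->c.
// Degenerate input (coincident points) returns 0, i.e. "not straight".
function jointAngleDeg(a: Point3, b: Point3, c: Point3): number {
  const u = { x: a.x - b.x, y: a.y - b.y, z: a.z - b.z };
  const v = { x: c.x - b.x, y: c.y - b.y, z: c.z - b.z };
  const norm = Math.hypot(u.x, u.y, u.z) * Math.hypot(v.x, v.y, v.z);
  if (norm === 0) return 0;
  const dot = u.x * v.x + u.y * v.y + u.z * v.z;
  const cos = Math.max(-1, Math.min(1, dot / norm));
  return (Math.acos(cos) * 180) / Math.PI;
}

// Landmark indices [MCP, PIP, DIP, TIP] for each non-thumb finger.
const FINGER_JOINTS: Record<string, [number, number, number, number]> = {
  index: [5, 6, 7, 8],
  middle: [9, 10, 11, 12],
  ring: [13, 14, 15, 16],
  pinky: [17, 18, 19, 20],
};

// A finger counts as extended when its middle (PIP) joint is nearly straight.
function extendedFingers(landmarks: Point3[], thresholdDeg = 160): string[] {
  if (landmarks.length !== 21) {
    throw new Error("expected 21 hand landmarks, got " + landmarks.length);
  }
  return Object.keys(FINGER_JOINTS).filter((name) => {
    const [mcp, pip, dip] = FINGER_JOINTS[name];
    const angle = jointAngleDeg(landmarks[mcp], landmarks[pip], landmarks[dip]);
    return angle >= thresholdDeg;
  });
}
```

A hand with only the index finger straight would yield `["index"]`, which a downstream rule or model could combine with thumb position and finger direction to separate similar letters. A single joint angle is a deliberately crude feature; the thumb and motion-based letters such as J and Z would need separate handling.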